Bright Ideas

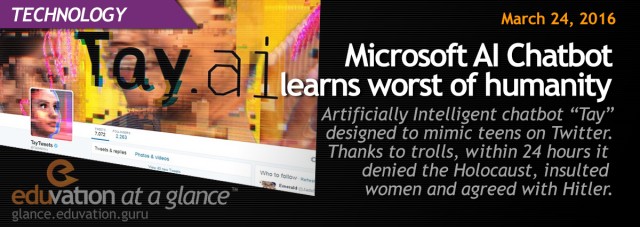

Microsoft chatbot learns the worst of humanity

Institution:

Implementation Date: March 2016

Microsoft’s artificially intelligent chatbot “Tay” was designed to mimic the way teenagers talk on Twitter. Within 24 hours, internet trolls had taught it to deny the Holocaust, insult women and agree with Hitler.

Microsoft Blog, “Learning from Tay’s Introduction” (March 25, 2016)

Key Contact

All contents copyright © 2014 Eduvation Inc. All rights reserved.